In part one of this multi-part series we explored the Seagate NAS Pro 16TB as a NAS solution. For part two, our question was: how do you back up the backup device? It sounds confusing, but it isn’t. In this installment we return to the recently released Seagate NAS Pro, a 16TB Network Attached Storage unit powered by the LaCie NAS OS 4 operating system, this time with NetBackup enabled so that a second NAS Pro can serve as an automated offsite backup solution, at a fraction of the cost of some commercially available “cloud” offerings.

Just to recap, in the first of this multi-part article on NAS devices, we reviewed the recently released Seagate NAS Pro, a 16TB Network Attached Storage unit powered by the LaCie NAS OS 4 operating system. As we reported earlier, I had some trouble wrapping my head around the various setup options, but luckily Seagate connected me with Jon Bauder, a knowledgeable (and incredibly patient) tech support engineer who walked me through the various options and strategies. Anyone who has worked with share points and users will find the setup a breeze, with the main questions concerning more specific features and strategy. For example, one feature I wanted to try was its Time Machine option. Because Time Machine will keep writing to any volume until it’s full, and I didn’t want the NAS filled up by my Time Machine, the solution was to create a new User, then create a Share, and then set a quota. In my case 1TB was appropriate for a 750GB source drive. Thus, there was no chance of Time Machine’s daily backups impacting the rest of the NAS’s capacity. These quota decisions are critical, since they also determine the storage needs of your offsite backup, in this case the second Seagate NAS Pro we are using for backup.

Getting Started

OK, so how does one get started creating a personal cloud backup using another NAS Pro? The oft-repeated advice of IT professionals is to use the “cloud” to provide an offsite backup solution, and this is sound advice. If someone should break into your office, studio, or home and steal your NAS, you are in big trouble. The same concerns are present with more elemental threats, such as fires, floods, or earthquakes. One of the issues that came to light after the 9/11 terrorist attacks was the surprising number of companies whose backups were located in the towers, in their own offices, with no offsite copy, so when they were lost, there wasn’t a backup elsewhere to assist in recovery.

Thus the best practice is to have an offsite backup as one leg of your backup strategy. One approach is to back up to a hard drive and stash it offsite, even if via Sneakernet, Sidewalknet, or Carnet (putting the hard drive in a car and driving it somewhere else to reside until the next backup). This is something we tested using SoftRaid 5, which makes it easy to create a mirror, remove a drive, then reinsert the drive at a later date, at which point it rebuilds and updates the mirror and its contents. But this means that there is a gap in backup coverage: as long as that drive is at another location, your data isn’t being backed up to it. Still, it’s a wise strategy: the drive is offline, thus immune to viruses, power surges, and corruption, and you have a bit of a buffer, since if your current backup becomes corrupted, the offsite version might predate the corruption. So an automated nightly backup, such as that offered by a pair of NAS units, makes perfect sense, since it ensures you are backed up on a daily basis.

Remember the old rule of backups: how much work and data are you willing to lose? If nothing, then back up continuously. If you feel comfortable losing a few hours of work, then back up a few times a day. And if a month, well, I have no answer for that approach; it’s so incredibly dumb. How often you back up is basically gambling. Back up often, and you aren’t a risk taker; back up every once in a while, and you probably spend your free time at the blackjack table. Me, when it comes to data, I don’t like to gamble, and neither should you.

The “cloud” or “basement” is a great solution for users with a small amount of data, say a few gigabytes. But for the creative user with terabytes of data, at the time of this writing, the cost for only 1TB of Amazon Cloud Drive is $500 for one year, whereas the cost for a 16TB Seagate NAS Pro is $1,199. So, you do the math. Both provide data that can be accessed from anywhere in the world; one offers Enterprise level drive storage, the other uses RAID 5 and NAS specific hard drives, and both are as fast as your Ethernet connection and ISP will allow. Of course, an Enterprise level data center has far more redundancy than a small RAID, and there are other advantages, but we see the high cost as a serious issue, not only for the expense itself, but because the expense often forces folks to decide what to back up and what to leave unprotected.

That digital storage triage is a problem, since the data you need isn’t always the data you expect to need, and if cost is an issue, we often back up only what we think requires backing up. That is not a best practice by any means.

So, when I explored this with Seagate, one answer they proposed was that users should consider a second NAS device as a backup, as their own “cloud”. Initially it was to be the lower cost Seagate NAS, but after two early production run units refused to initialize, Seagate swapped them for another NAS Pro, which worked perfectly out of the box. Still, it’s a bit of overkill to use a NAS Pro unit only for backup, so now that the lower priced Seagate NAS has its initialization issues sorted out, it should be fine, and all the comments in this article should apply to it. Just a thought if you want to save a few bucks. I have to admit that I have grown fond of the LCD display on the NAS Pro for status checks and updates.

A few comments to begin with. When I first started testing the second NAS Pro as a backup to the first, I used it on a local network. Why? Well, it was much faster: since I was running a Gigabit network, backing up terabytes of data was significantly quicker. And, as I worked with it and explored the software options, it was helpful to have it local. Originally I thought that I could do the bulk of the data backup locally, taking advantage of the faster network speeds, and then move the device offsite and let it continue with its automated backups. But that wasn’t in the cards. The backup must be initiated either offsite or locally, and once it has been started locally, you would need to erase the data and start the backup from scratch from the offsite location. The reason isn’t in the hardware, it’s the software, and if there is one feature I’d love to see added, it would be to let a user start with a local data transfer at the faster data rate, then move the unit offsite and have backups continue. Hint, hint, Seagate coders!

Not having to wait for data to back up over the Internet isn’t all that unique a request. In the past, when we interviewed vendors and wrote about cloud services, most offered the ability to ship a hard drive with your data on it for an almost instant upload to their servers, so that backups start in a few days, rather than weeks or in some instances months. Once that data is uploaded, backups take a few minutes a day, and you are current. Without that option, the user is in data backup limbo: depending on your ISP’s data rate and overhead, it might take weeks, months, or even a year or more to back up 16TB of data via the Internet, during which time you have no complete offsite backup.
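To put rough numbers on that limbo, here is a minimal back-of-the-envelope sketch in Python. The upload speeds and the 80% efficiency factor are illustrative assumptions of ours, not measurements from the NAS Pro or from any particular ISP, and the function name is hypothetical.

```python
def upload_days(data_tb: float, upload_mbps: float, efficiency: float = 0.8) -> float:
    """Estimate the days needed to upload data_tb terabytes.

    upload_mbps is the advertised upstream rate in megabits per second.
    efficiency is an assumed fraction of that rate actually achieved
    after protocol overhead and competing traffic (a guess, not a measurement).
    """
    total_bits = data_tb * 1e12 * 8              # decimal terabytes -> bits
    effective_bps = upload_mbps * 1e6 * efficiency
    seconds = total_bits / effective_bps
    return seconds / 86_400                      # seconds per day


if __name__ == "__main__":
    # Hypothetical upstream speeds, chosen only for illustration.
    for mbps in (5, 25, 100):
        print(f"{mbps:>4} Mbps upstream: {upload_days(16, mbps):6.1f} days for 16TB")
```

Under these assumptions, a 5 Mbps upstream link needs roughly a year to move 16TB, while a 100 Mbps link needs a bit under three weeks, which is why the size of the initial backup matters so much.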

A wonderful article by Tony Bradley in Forbes (http://www.forbes.com/sites/tonybradley/2013/07/23/the-myth-of-online-backup/) discussed the case of a photographer who, after 6 months of backing up to Carbonite Home, still hadn’t completed the initial 500GB of data, only to discover that Carbonite limited the upload speed to 22GB per day, and slowed it to half that rate after 200MB were transferred. The article, written in 2013, is a bit out-of-date in terms of bandwidth, but not by much in our experience, and it reinforces the importance of considering your own, far faster backup strategy.

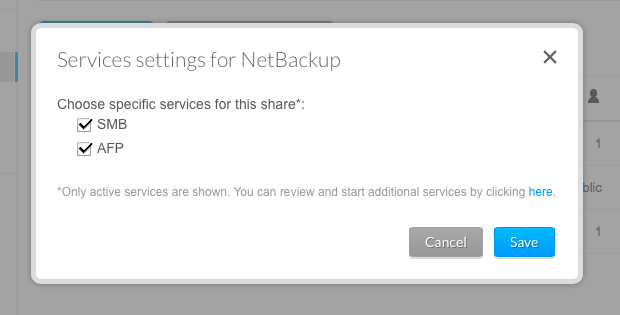

Enabling backup services on the Seagate NAS Pro is a piece of cake. You launch the NAS software and click the option for NetBackup, and then the only questions are what data you want to back up, and at what time. I chose to back up the Time Machine partition on my NAS Pro, along with a Music and a DVR share, and set the time to 3 am, encouraging this Night Owl to get some sleep for a change. The first time I set it up, running on a local network, it took far less time than I expected, just a few hours for around 6TB of data. Subsequent backups took only a few minutes each night. The NAS OS 4 operating system has the option to alert you via e-mail when your backup job starts and when it completes, and each morning I’d awake to a message confirming the job’s successful completion. I also enabled the options for SMB and AFP in the Service Settings for the NetBackup dialog box. Creating Shares and Users is very easy and intuitive, with drag-and-drop making it simple to adjust those settings, a benefit of the updated GUI and its friendlier menu system.

How best to use NetBackup depends on a careful examination of your own personal or office workflow. I have a lot of large files, and unchecked dataset expansion is a serious concern. Turn your back and you could suddenly be out of room. As previously mentioned, I faced this with Time Machine, which will just fill up any volume you point it at until, facing a finite amount of space, it starts to delete old backups. So I took advantage of quotas on the NAS Pro for my initial setup, and my backups on the backup NAS Pro respected those quotas, which is to say that if my quota was set to 1TB, the backup system would only capture data up to that source’s quota limit, ensuring that the backup wouldn’t run out of space. There are some things I’ve yet to complete, such as configuring a backup of my Adobe Lightroom 6 catalog backups. Why? Well, even my friends at Adobe recommend not relying on the Lightroom 6 backup option, since it doesn’t back up changes to files, only the original source, which means that all your work on the files isn’t backed up! Using an app like Carbon Copy Cloner or SuperDuper allows one to back it up and automate the process. So my next step will be to create a share on the NAS Pro, back up the CCC backup to it, and thus set up a nightly backup. I’ll write an article about the process, so wish me luck!

While some folks find assurance in utilizing an external data center as a “cloud” solution, for folks working with a great many large files and creative content, the “cloud” might prove more of a hassle than they expect. We tested a large provider’s backup utility, and realized that any thought of quickly retrieving a set of files was almost an illusion. It was insanely slow, and it wasn’t the sort of Finder operation a user might expect. By comparison, dragging a file or folder of files from the Seagate NAS backup was immediate, and would probably save the day if the need arose. The cost factor also matters here, since for a fraction of the cost a user can back up a significant amount of data: 12TB in the case of the 16TB NAS Pro, counting the RAID 5 hit on the total amount of available space. As mentioned previously, looking at Amazon’s pricing structure, to back up 16TB of data would run a heart-stopping $8,000 a year. Say we only back up 12TB of data; the price would still be $6,000 a year. Compare that to roughly $1,200 for a NAS Pro for offsite backup, with no additional purchase cost after that.
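For readers who want to redo that math with their own numbers, here is a small Python sketch. The prices are the ones quoted in this article; the four-drive, 4TB-per-drive layout is our assumption about how a 16TB unit arrives at 12TB usable under RAID 5, and the function names are hypothetical.

```python
def raid5_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable capacity of a RAID 5 array: one drive's worth of space goes to parity."""
    if drive_count < 3:
        raise ValueError("RAID 5 needs at least three drives")
    return (drive_count - 1) * drive_tb


def cloud_cost(data_tb: float, price_per_tb_year: float, years: int = 1) -> float:
    """Total cloud storage cost at a flat per-terabyte yearly price."""
    return data_tb * price_per_tb_year * years


CLOUD_PRICE_PER_TB_YEAR = 500   # Amazon Cloud Drive figure quoted in this article
NAS_PRICE = 1199                # one-time price of a 16TB NAS Pro quoted in this article

if __name__ == "__main__":
    usable = raid5_usable_tb(drive_count=4, drive_tb=4)   # assumed 4 x 4TB layout
    print(f"Usable RAID 5 capacity: {usable:.0f}TB")
    for tb in (16, usable):
        yearly = cloud_cost(tb, CLOUD_PRICE_PER_TB_YEAR)
        months = NAS_PRICE / yearly * 12
        print(f"{tb:.0f}TB in the cloud: ${yearly:,.0f} per year; "
              f"the NAS pays for itself in about {months:.1f} months")
```

The sketch ignores electricity, the host’s bandwidth, and eventual drive replacement, so treat the break-even figure as a lower bound rather than a full cost of ownership.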

To test the offsite backup routine I brought the unit over to a friend’s house, plugged it into the network router, and powered it up, and it set to work. No fuss and certainly no muss. I used a pair of Cyberpower UPS units with both NAS devices, to provide battery backup and surge protection. It did take some time to back up over the net: about a week for the initial backup, and a few minutes each night once the initial backup was complete. I noticed that the data load my friends placed on their network impacted the backup speed at times, but surprisingly much less than I expected. With lots of movies over a weekend and late hours, backup times increased slightly, but during the day when they were at work, times decreased. Normally you might schedule those backups for a time when all should be asleep, such as 3 am. You could also place one in your office; using Verizon FIOS, we found the backup times nothing to worry about once the initial backup was in place. Now, if they could just tweak the NAS OS to allow for a local backup, followed by a move of the unit to an offsite location! Once the backup is enabled, it is entirely automated; if it weren’t for the status e-mails I’d have no idea it was backing up the primary NAS Pro. It was also stable, and when I received a note that new firmware was available, the update ran quietly and quickly. Everything updated properly, with no extra effort.

For users seeking an easy-to-use but extraordinarily powerful backup NAS solution and their own personal “Cloud”, the Seagate NAS Pro offers tremendous storage, power, and easy-to-configure yet fully featured software that will meet the needs of both consumers and professionals, all at an affordable price. The updated NAS OS 4 operating system is that rare combination of flexibility and depth: one can use it very simply, or take advantage of its depth to meet the needs of IT professionals. One thing that struck me after several months of use is just how stable and reliable the system was. And… it was invisible; it just worked, which to us is the highest possible compliment. And unlike most commercial “cloud” solutions, it is a file-based system, so if you need a file, you can copy it via a Finder drag and drop. NetBackup is easy, intuitive, and able to save the day in an emergency. By comparison, most “cloud” backups are designed to restore whole sets of data, which is not quite the same thing, so if you need to retrieve an individual file, it’s an entirely different experience.

For any user seeking a way to create their own offsite or local backup of their NAS Pro, having a second Seagate NAS Pro with NetBackup enabled as a personal, professional “Cloud” for either office, home, or studio comes highly recommended.

Our next article in this series, part three, explores what happens when things go seriously wrong and you need a recovery service. It’s a worst-case scenario, but it happens. Fortunately we found that our stress level dropped thanks to the work of Seagate Recovery Service, so stay tuned for our recovery saga!

Harris Fogel, with editorial input by Nancy Burlan & Ken Kramar, posted 5/24/2015

For more information on the Seagate NAS Pro visit: www.seagate.com